Developing Tools for a Trustworthy Internet

We build Nima, the content moderation platform that protects online platforms and their users. Designed by our engineers and tech policy experts, it's ready to scale and streamline Trust & Safety (T&S) workflows.

Unpacking Regulation and Mitigating Risks

We design solutions that help online platforms simplify T&S, manage risk, and comply with global regulations, including the EU Digital Services Act and the UK Online Safety Act.

Driving Innovation with Diverse Talent

We bring cross-functional Trust & Safety expertise across product, engineering, policy, and risk management, backed by years of experience in the space.

Trusted by industry leaders

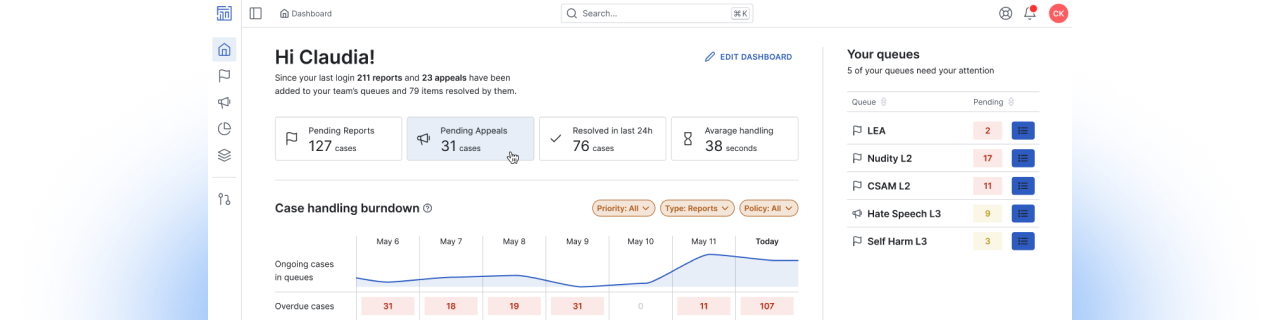

Nima, the Trust & Safety Platform

Moderate content in a single click: Nima centralises all data signals and detection sources and supports both manual and automated processing.

Experts in Operations & Regulations

Our team of professionals, with deep expertise in Trust & Safety, Tech Policy, and Risk Management, is ready to help your online service.

Our impact on Trust & Safety

M+

Monthly Pieces of Content Moderated

Trusted at scale to safeguard online communities and protect users.

%+

Moderation Efficiency

Cutting the number of clicks and steps needed to moderate harmful content.

%+

Cost Savings

Focus on the operations that matter and streamline your system.


We're here to help. Let's start the conversation.

We work with ambitious leaders who want to define the future, not hide from it. Together, we achieve extraordinary outcomes.
