
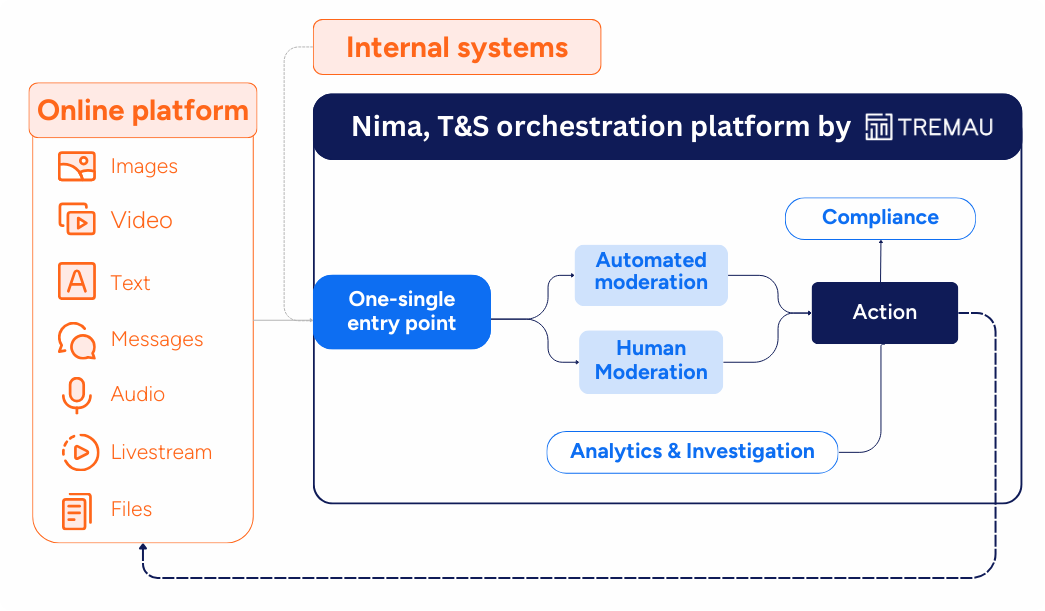

Building Fair and Scalable Trust & Safety Enforcement Systems

Over the past decade, Trust & Safety (T&S) has cemented its position as a core business enabler and a must-have for all online platforms. This is ever more true in today's fast-moving, constantly changing online environment. New threat vectors, from coordinated scams and harassment to GenAI-enabled abuse, are emerging alongside an exponential increase in content volumes, often outpacing platforms’ ability to scale legacy moderation systems. At the same time, platforms must operate in an increasingly complex international regulatory and political landscape.

Recommended

Check the fairness and scalability of your Trust & Safety operations

As online platforms scale, Trust & Safety operations face increasing pressure to balance speed, consistency, and explainability. Rising content volumes, new abuse patterns, and growing regulatory scrutiny mean enforcement systems must operate efficiently, without compromising fairness or user trust. Download the checklist to see how your Trust & Safety operations measure up against industry best practices today.

Beyond the cost centre: articulating the business value of Trust & Safety

What T&S investment protects, enables, and de-risks. Gone are the days when Trust & Safety (T&S) teams were seen as a costly back-office support function. There is broad acknowledgement that the work is complex and challenging. Yet T&S still struggles to articulate its real ROI. This article breaks down how T&S drives tangible business value through four critical pillars: user retention, product development, regulatory resilience, and financial performance.

The Evolution of T&S: A Personal Perspective on Why This Work Matters More Than Ever

Insights from Julie de Bailliencourt, former Global Head of Product Policy for TikTok, now Director of Trust & Safety at Tremau